Alife News

The Artificial Life Community Newsletter

A word from the team

Welcome to the 5th issue of the ALife Newsletter. We have been gaining some momentum over the last few issues, and we are glad to see so many of you enjoying and contributing to this newsletter!

This edition is packed full of great content: media and conference reviews, news from some recent competitions, and more information about our upcoming podcast. Be sure to check it all out and let us know what you think.

The Newsletter is distributed by email, and archived on the International Society for Artificial Life's website.

You can subscribe here.

Contribute to any section of the next newsletter here!

Lana, Imy, Mitsuyoshi, Claus and Katt.

- Alife Art: Project ALIEN

- Project Highlight: Fingerpainting Fitness Landscapes

- Project Highlight: MFM.rocks

- Competition Highlight: Evocraft

- Media Review: "Picture A Scientist" documentary

- Conference Review: ACM/IEEE HRI (Human-Robot Interaction) Conference 2022

- Results of the Fiction Science Contest

- Upcoming Deadlines:

- ALife Community Podcast: Call for Questions

- Call for Volunteers

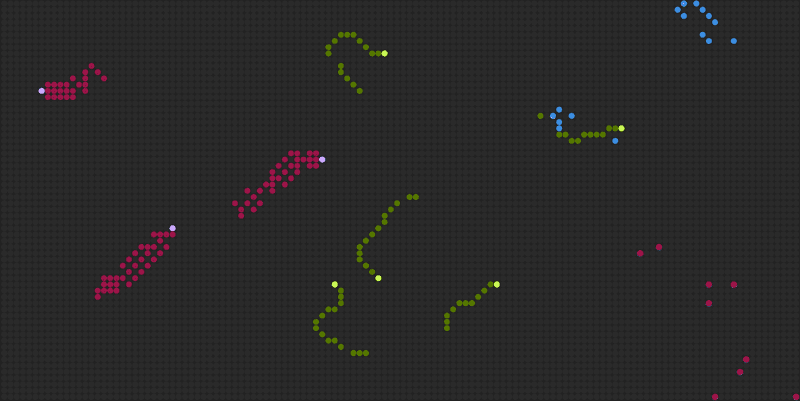

Alife Art: Project ALIEN

Contributed by Christian Heinemann

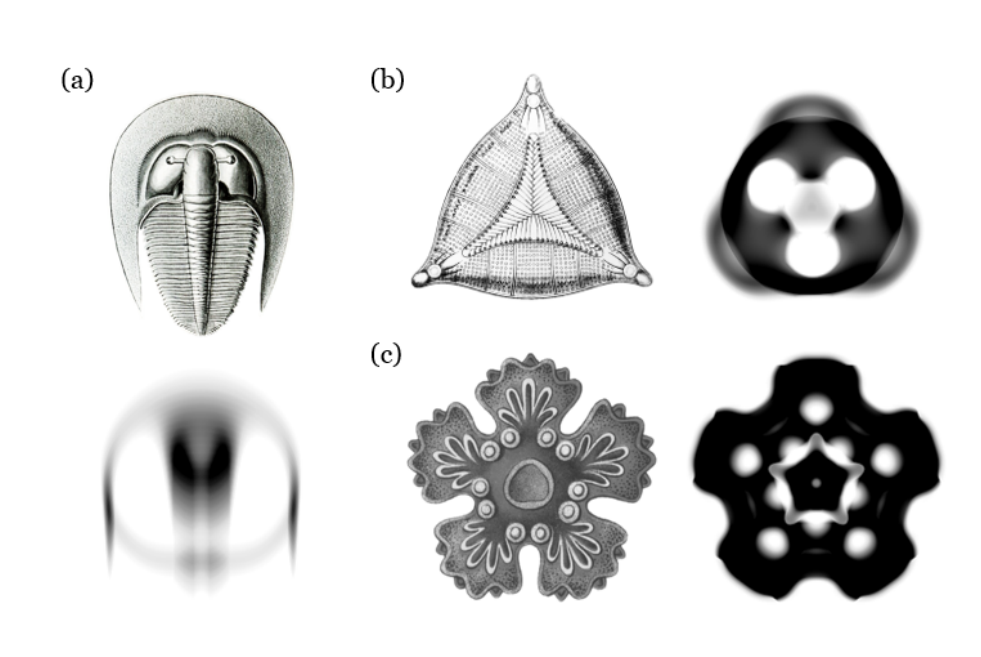

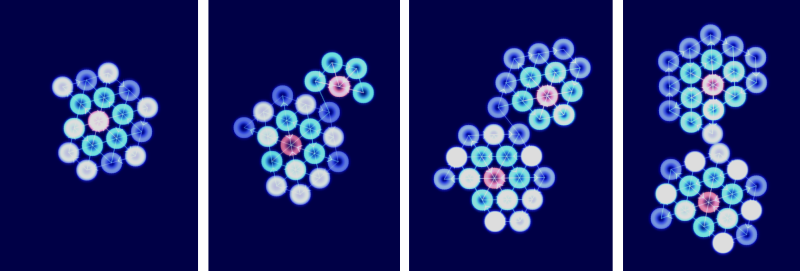

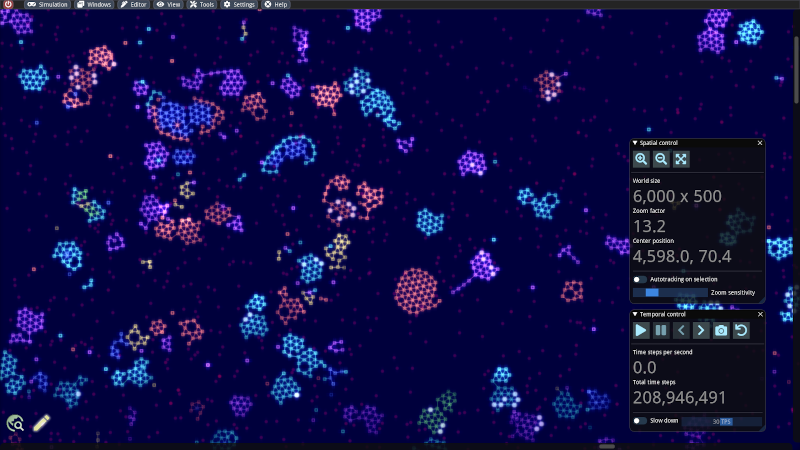

ALIEN is an open-source artificial life project which aims to bring together particle-based simulations for soft bodies with a programming model for distributed systems. In this context, agents are networks of connected particles, where the nodes possess capabilities that could be attributed to robotic or biological components: sensors, muscles, computational units and much more. Communication inside a particle network is performed by signals consisting of stateful and transient entities. These signals allow more complex behavior patterns, such as food seeking, self-replication, controlled movements, attacking and digestion of resources, and swarming behavior, to be implemented in the networks. The simulator comes with various examples to play with, ranging from purely mechanical simulations to evolution simulations. Users can construct their own particle machines with the built-in graph editing and programming environment. For instance, a self-replicating machine provided with enough resources could work as follows:

From left to right: a possible replication cycle of a particle machine.

The replication process for this machine takes place in such a way that, starting from a center node, its own network structure is scanned and reconstructed in a spiral sequence. By injecting such a machine equipped with a functioning metabolism into a pristine world exposed to mutations, evolution simulations can be conducted. If desired, the user of the program can merely act as an observer and adjust some simulation parameters from time to time.

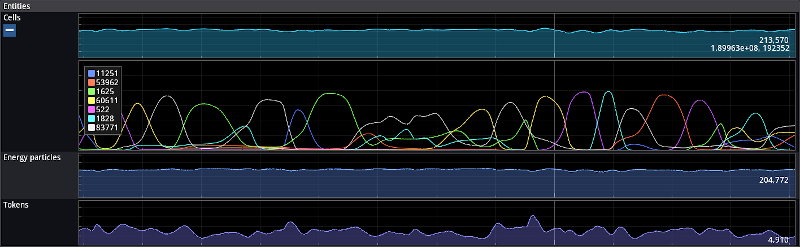

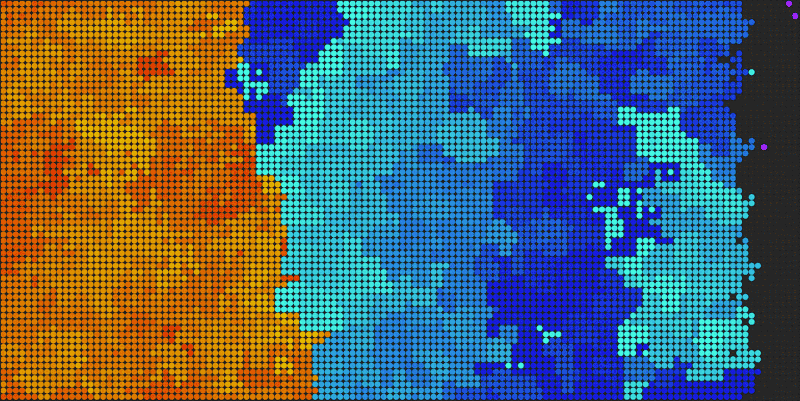

The color of a particle has become more important in recent updates. There are several colors available, and they can play a crucial role in the sensing and digesting functions depending on the simulation settings. In this long-term diagram taken from the built-in simulation monitor, for instance, one can see how populations of replicators with different colors have gained predominance in different epochs.

An objective of this project is to encourage experimentation with worlds full of wonders that keep surprising the observers. ALIEN is powered by its own physics and rendering engine written in CUDA and thus requires an Nvidia graphics card.

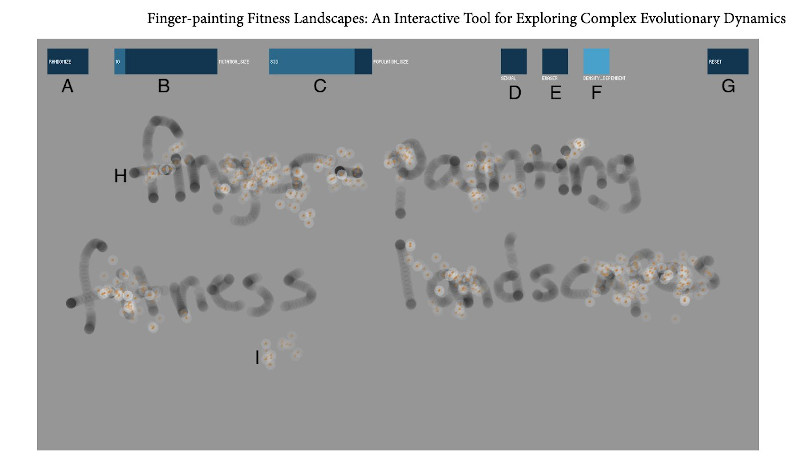

Project Highlight: Fingerpainting Fitness Landscapes

Contributed by Luis Zaman, Original Twitter Thread

While I was a graduate student, I had a bit of fun one weekend and made a touch screen simulation to play with fitness landscapes. @RELenski, @CharlesOfria, and I wrote about this tool back in 2012. Now a version lives online!

You can watch a population evolve in your browser! Here, we're watching the population neutrally move around the fitness landscape. Note how correlations in the fitness landscape emerge from coalescing lineages.

By painting in the canvas, you can create regions of higher fitness and watch as the population climbs new peaks.

You can "poke" the population by erasing regions of high fitness, and watch as individuals seemingly scurry to find high fitness regions!

We can see what happens if fitness is density dependent, where organisms depress the fitness landscape locally. This is depicted (and mechanistically implemented) by lightening the fitness landscape around each individual.

Sexual recombination is a great way to increase variation, but it can also collapse diversity where hybrid organisms "fall off" high fitness regions. We can see that directly in cute fitness landscapes!

And one of my favorite fitness landscape phenomena, survival of the flattest at high mutation rates, is easy to recreate! We can see the population favor the large but less fit region as we crank up the mutation rate.

I hope to keep adding to this (e.g., coevolution!), but please let me know if you have ideas. I'm certainly planning a few lessons in my classes using this, but would love to hear if others are interested!

(Addendum: Co-evolution has been added!)
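For readers who would like to tinker offline, the survival-of-the-flattest effect described above can be sketched in a few lines of Python. This is not the code behind the online tool: it is a minimal sketch that assumes a one-dimensional landscape (the tool is two-dimensional), a hypothetical tall narrow peak next to a lower broad plateau, and a simple Wright-Fisher style update with Gaussian mutation. All names and numbers are illustrative assumptions.

```python
import random

# Hypothetical 1-D landscape (an assumption for illustration):
# a tall, narrow peak and a lower, broad plateau on a low background.
def fitness(x):
    if 20 <= x <= 22:   # narrow peak, highest fitness
        return 2.0
    if 60 <= x <= 90:   # broad plateau, lower fitness
        return 1.5
    return 0.1          # background


def step(population, mutation_sd, rng, width=100.0):
    """One generation: fitness-proportional parents, Gaussian mutation."""
    weights = [fitness(x) for x in population]
    parents = rng.choices(population, weights=weights, k=len(population))
    # Offspring inherit the parent's position plus noise, clamped to the edges.
    return [min(width, max(0.0, p + rng.gauss(0.0, mutation_sd))) for p in parents]


def run(mutation_sd, generations=200, size=200, seed=0):
    """Return (individuals on the peak, individuals on the plateau) at the end."""
    rng = random.Random(seed)
    # Start with half the population on each region.
    population = [21.0] * (size // 2) + [75.0] * (size - size // 2)
    for _ in range(generations):
        population = step(population, mutation_sd, rng)
    on_peak = sum(20 <= x <= 22 for x in population)
    on_plateau = sum(60 <= x <= 90 for x in population)
    return on_peak, on_plateau


if __name__ == "__main__":
    for sd in (0.1, 3.0):
        print("mutation sd", sd, "-> (peak, plateau) =", run(sd))
```

With a small mutation step, offspring of peak dwellers mostly stay on the peak, so the fitter region should win; with a large step, most of them fall off the narrow peak while plateau dwellers stay on the plateau, so the flatter region should win instead.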

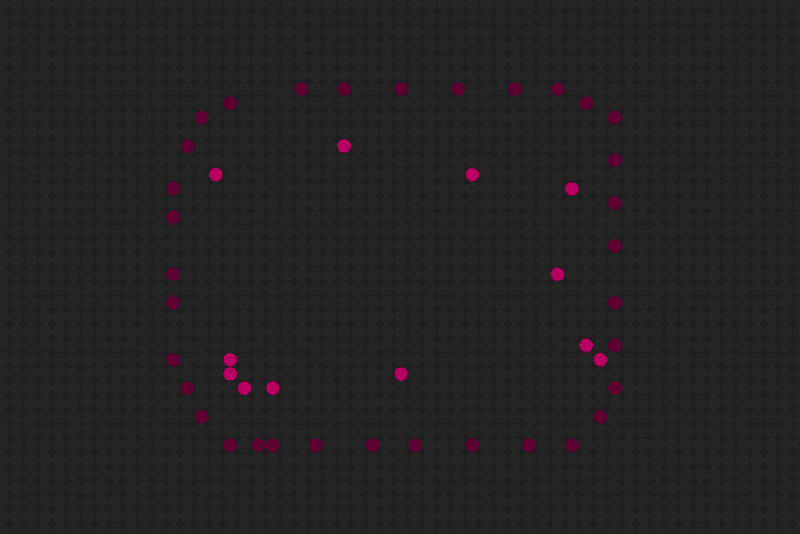

Project Highlight: MFM.rocks

Contributed by Andrew Walpole

If you're unfamiliar with Dave Ackley's Movable Feast Machine (MFM), it's a radically different architecture; an entirely new computational stack of hardware and software. An indefinitely-scalable, non-deterministic, tiled grid system meant to free itself from the fragility and constraints of a centralized computational model. (You can learn more about Dr. Ackley's system via the T2 Tile Project, where over the last 3+ years he has video-documented his journey of building a hardware-based indefinitely scalable MFM grid.)

Mfm.rocks is my playground of sorts; an exploratorium for this uncharted computational model. Not quite having the confidence or know-how to dive into Dr. Ackley’s platform directly, I created the underlying mfm-js library out of an insatiable yearning to explore MFM concepts first-hand. Recently I’ve completely rewritten the library (mfm-js 2.0) to be substantially more performant, allowing for bigger grids and more play!

Most notably, the bug that really bit me was the idea that in a robust-first environment, we will need to lean into living-systems concepts – regeneration, organic growth, reproduction, systems-thinking – in order to build software that can resiliently compute within a hostile digital environment. And while I can’t say there is too much useful computing going on as of yet, exploring lower-level living-systems concepts is unlocking ideas. Here are a few of those elemental explorations.

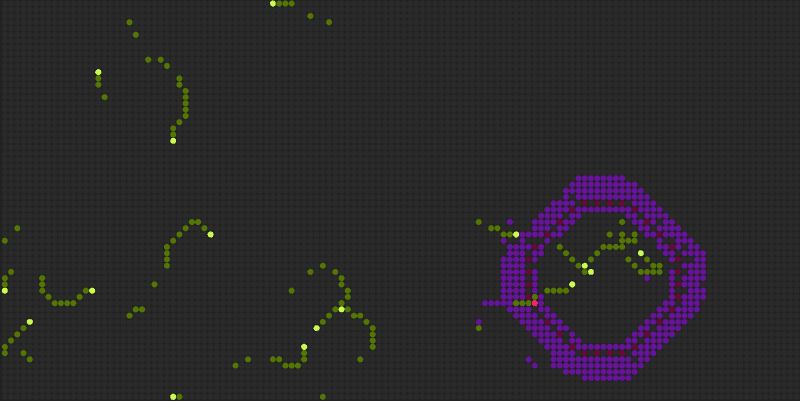

Directionals

Directionals is the name I’ve given to grid travelers that keep track of a directional heading, which allows them to turn left, turn right, or reverse on the grid. Here are a few: Fly, Mosquito, Bird and Wanderer.

Directors

Directors, which can influence the heading of any Directional, show how we might create spatial structures that lead a computational workflow.
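To make the two ideas above concrete, here is a minimal sketch in Python. It is not the mfm-js API (which is a JavaScript library) and it ignores the MFM's asynchronous, event-window-based execution; the class name, the wrap-around grid, and the rule that a Director overrides the heading of a traveler standing on its site are all assumptions made for illustration.

```python
# Headings in clockwise order: north, east, south, west (y grows downward).
HEADINGS = [(0, -1), (1, 0), (0, 1), (-1, 0)]
NORTH, EAST, SOUTH, WEST = range(4)


class Directional:
    """A hypothetical grid traveler that remembers which way it is heading."""

    def __init__(self, x, y, heading=NORTH):
        self.x, self.y, self.heading = x, y, heading

    def turn_right(self):
        self.heading = (self.heading + 1) % 4

    def turn_left(self):
        self.heading = (self.heading - 1) % 4

    def reverse(self):
        self.heading = (self.heading + 2) % 4

    def step(self, directors, width, height):
        # Assumed rule: a Director on the current site sets the heading.
        if (self.x, self.y) in directors:
            self.heading = directors[(self.x, self.y)]
        dx, dy = HEADINGS[self.heading]
        # Wrap around the edges to keep the sketch short.
        self.x = (self.x + dx) % width
        self.y = (self.y + dy) % height


# Four Directors at the corners of a square route the traveler in a loop.
directors = {(1, 1): EAST, (3, 1): SOUTH, (3, 3): WEST, (1, 3): NORTH}
traveler = Directional(1, 1)
path = []
for _ in range(8):
    traveler.step(directors, width=5, height=5)
    path.append((traveler.x, traveler.y))
```

In this sketch the four Directors act as a spatial structure that steers the traveler around a square and back to its starting site after eight steps.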

Looper

Looper is really a culmination of both concepts. A Directional itself, it loops around, leaving a trail of goop and Directors that trap any unsuspecting nearby Directional.

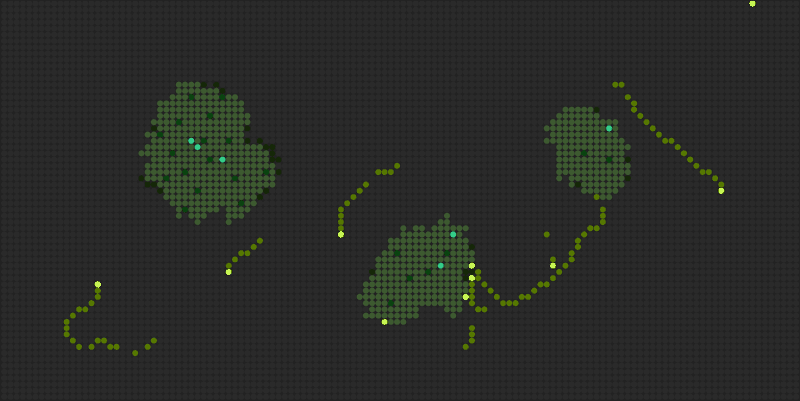

OfSwamp

OfSwamp has a Cell-like composition, but it came about as an exploration of Environments and Systems. Swampling will loop around and set up a Swamp environment within which all OfSwamp types can freely move, while non-swampkind have a much tougher time intruding. I had approached cell building in the past, but through the lens of an environment the concepts used to put this together ended up being very different.

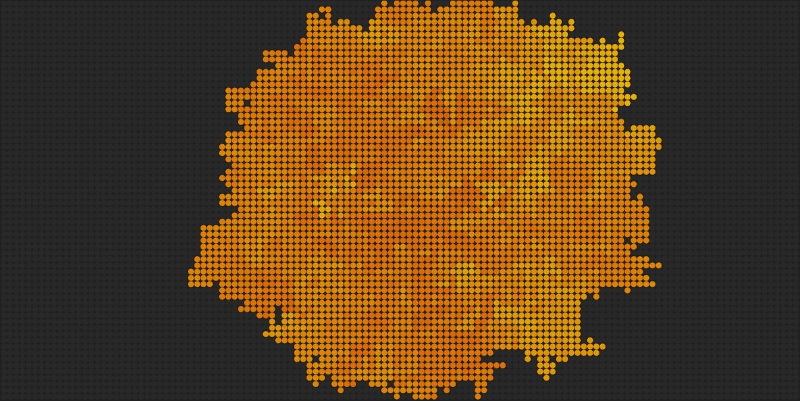

ForkBomb

ForkBomb is sort of the infinite loop of the MFM grid, unabashedly consuming everything in its endless wake.

AntiForkBomb and Sentry

But Sentry, who spits out AntiForkBomb, can easily put a stop to this menace.

Living Wall

While some structures that are less robust easily get wiped out by ForkBomb, a Living Wall structure knows how to heal itself a bit as long as there are a few Sentry to keep it from getting entirely overridden.

It’s only been a few months of working with mfm-js 2.0 and I’m only just getting started with this new set of living-systems experiments. I would like to explore more around regeneration, and building computational systems using specialized structures and elemental environments on the grid. There are also more elements and demos in the 1.0 version (most notably, SwapWorm and CellBrane), which is still available at https://mfm.rocks/v1/, as well as Dungeon Grid, a game I built on top of the mfm-js library, at https://mfm.rocks/v1/game/. The project is open source and I welcome anyone interested in building elements to jump in. If you have any questions you can find me, Andrew Walpole, on Twitter or on the T2 Tile Discord Server!

Competition Highlight: Evocraft

Contributed by Elias Najarro

The purpose of this contest on open-endedness is to highlight the progress in algorithms that can create novel and increasingly complex artefacts. While most experiments in open-ended evolution have so far focused on simple toy domains, we believe Minecraft, with its almost unlimited possibilities, is the perfect environment to study and compare such approaches. While other popular Minecraft competitions, like MineRL, have an agent-centric focus, in this competition the goal is to directly evolve Minecraft builds.

To facilitate the development of submissions, we provide the EvoCraft API: a Python interface to Minecraft. EvoCraft is implemented as a mod for Minecraft that allows clients to manipulate blocks in a running Minecraft server programmatically through an API. The framework is specifically developed to facilitate experiments in artificial evolution. The competition framework also supports the recently added "redstone" circuit components in Minecraft, which have allowed players to build amazing functional structures, such as bridge builders, battle robots, or even complete CPUs. Can an open-ended algorithm running in Minecraft discover similarly complex artefacts automatically? If there is an API feature that you would like to use and that is currently missing, feel free to open an issue on the GitHub repo.

If you want to get familiar with the notion of open-ended algorithms, this is a good starting point: Open-endedness: The last grand challenge you’ve never heard of.
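As a rough illustration of what "directly evolving builds" can mean, the sketch below runs a tiny novelty-driven loop over block layouts. It deliberately does not call the EvoCraft API: a build is just a Python dictionary from (x, y, z) positions to block labels, and sending it to a Minecraft server is left out. The block labels, the descriptor, and the archive rule are all assumptions chosen for brevity, not the competition's method.

```python
import random

# Hypothetical block labels; these are plain strings, not EvoCraft identifiers.
BLOCK_TYPES = ["stone", "glass", "redstone_block", "piston"]


def mutate(build, rng, size=8):
    """Return a copy of the build with one random cell set, changed, or cleared."""
    child = dict(build)
    pos = (rng.randrange(size), rng.randrange(size), rng.randrange(size))
    if pos in child and rng.random() < 0.5:
        del child[pos]
    else:
        child[pos] = rng.choice(BLOCK_TYPES)
    return child


def descriptor(build):
    """Crude summary of a build: block count, height, number of distinct types."""
    height = max((y for (_, y, _) in build), default=-1) + 1
    return (len(build), height, len(set(build.values())))


def novelty_search(generations=200, seed=0):
    """Keep one build per previously unseen descriptor (an assumed archive rule)."""
    rng = random.Random(seed)
    archive = {descriptor({}): {}}  # start from the empty build
    for _ in range(generations):
        parent = rng.choice(list(archive.values()))
        child = mutate(parent, rng)
        archive.setdefault(descriptor(child), child)
    return archive


if __name__ == "__main__":
    archive = novelty_search()
    print(len(archive), "distinct build descriptors found")
```

A real submission would replace the crude descriptor with something that captures what a build does (for example, whether a redstone circuit moves anything), and would place each candidate in the running server to evaluate it.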

Media Review: "Picture A Scientist" documentary

By Aalok Varma

https://www.pictureascientist.com/

It’s well known nowadays that science is riddled with gender stereotyping and with the undermining of female scientists’ work. So much so that when children are asked to draw a scientist, they mostly draw white men in white lab coats. Gender inequality in science has cost not only the women who couldn’t pursue their passion, but also the scientific endeavour as a whole. “Picture A Scientist” follows some trailblazing women who have not only suffered at the hands of inequality, but have also fought back against it.

I would strongly recommend everyone watch this documentary for many reasons. First, it gives a voice to the women who have been either sexually or emotionally harassed by powerful men. Hearing some of the incidents is moving and is perhaps the best way to understand the ground reality of what it’s like to be a woman in science.

Second, it provides statistics to appeal to our logical side. Data, after all, is the currency of scientific discourse, and is powerful enough to convince those who don’t want to rely on anecdotal evidence alone. For instance, Nancy Hopkins at MIT, who was denied extra lab space while her junior male colleagues were given larger lab spaces, went about measuring everyone’s lab spaces and provided concrete data to the administration to prove that there was, indeed, systemic gender inequality. It was only then that the administration at MIT took the problem seriously and put in measures to reduce their inequality footprint.

Lastly, I think the documentary provides new information even to those who may already be aware of the problem and its more insidious sides. For instance, I learnt about a psychological test (called the Implicit Association Test) that demonstrates that even those of us who are acutely aware of gender inequality and consciously put in efforts to combat it suffer from unconscious or implicit biases that contribute to the problem. It made me pause and think about how much more ground we have to cover before we really achieve parity, even if we are committed to the cause.

All this might make you think that the documentary is depressing, which is partly true. However, it is also hopeful, because it showcases the solidarity people are showing in addressing the issue and bringing about lasting change. Do check it out. I think it would be a rewarding watch.

"Picture A Scientist" is currently available for streaming on Netflix.

Conference Review: ACM/IEEE HRI (Human-Robot Interaction) Conference 2022

Contribution by Imy Khan (@imy_tk)

The ACM/IEEE HRI (Human-Robot Interaction) Conference is an annual conference on, as the name suggests, all things related to human and robot interaction. Like ALife, HRI brings together a diverse set of researchers: computer scientists, AI researchers, roboticists, engineers, psychologists, and behavioural and social scientists. As seems to be the way of many conferences at the moment, this year’s conference was run entirely online, having moved from its intended location of Sapporo, Japan. This is the third time it has moved online, with next year’s conference hopefully being conducted in-person in Stockholm, Sweden. Here’s hoping…!

The virtual conference itself was hosted using Hopin (www.hopin.com): a platform designed for hosting virtual events. This was my first experience using Hopin, with other conferences using platforms like Zoom, Gather.Town, and so on. Unfortunately, this experience was not a particularly good one. While the HRI conference is traditionally busy and attempts to pack in a lot of content over the course of the conference, there just seemed to be too much going on, and Hopin’s interface appeared to be quite clunky when navigating between sessions or finding key information. There were many “virtual stages”, running parallel workshops and special sessions along with an industry expo area, but no clear information on precisely what was happening in any of these stages. There was a persistent chat room embedded into the platform, too. While this seems like a good idea to have, it descended into researchers using it to promote their workshops, special sessions, or their submissions, rather than serving as a communal forum for discussion. Quite simply, this didn’t work, despite the fact that it probably should have (bring back IRC!). Overall, I don’t think Hopin was a particularly good platform to use, and it made engagement with some parts of the conference quite frustrating. This is, of course, my own personal opinion on the platform (not a reflection on the conference), and others may have really enjoyed the Hopin experience.

Though the conference overall was excellent, with far too many exciting talks to summarise in a single review, the key highlight for me was Dr. Friederike Eyssel’s keynote - “What’s Social about Social Robots? A Psychological Perspective”. She concluded this talk by discussing research practices in HRI in a post-COVID-19 world: we should recognise that some countries and researchers have been hit harder by (restrictions imposed by) COVID-19 than others, and we should remain sensitive to this when we evaluate their research productivity, research methods, and their outputs. Because of these (ongoing) restrictions, many researchers may not have access to the same tools, methods, or platforms (in this case, complex, autonomous robots), and we should re-evaluate the way that “robot” research is being conducted. This may be of particular interest to researchers in Artificial Life, as it means that the use of simulations or virtual agents may, once again, be valued in these fields where they have, over time, fallen out of favour or have not been granted the space over other methods. So for ALifers who may be interested in expanding their dissemination or research options, it is plausible that the HRI community may be looking for researchers like us to help them give a (semi-)fresh lens to their research.

One interesting standout at this year’s conference was the number, and diversity, of social events on offer. These included virtual laboratory tours (via slides and videos, although a VR component could have been very cool), a yoga session, a Dungeons & Dragons one-shot campaign, a Judo workshop(!) and a karaoke night, where attendees were asked to partake in karaoke virtually. While I commend the idea, I did not attend that particular event, so I have no idea how well it translated to a virtual setting. Nonetheless, I wanted to make mention of these social events as it is clear that many communities, like ours, are attempting to capture the social component of conferences, and are finding incredibly quirky and creative ways to go about it.

One additional note is that the conference, as I believe it has done for a few years, also offers a “fee waiver” option for first-time attendees, students, or researchers from a selection of socio-economic backgrounds. While many conferences offer reduced/waived fees for the latter two, it was my first time seeing waived fees for first-time attendees. This is a great marketing tool for HRI: expanding the network and grabbing the attention of researchers who may, otherwise, not have been interested in attending, and who may find ways to contribute to the field in the future.

Overall, HRI was a great conference despite the (poor) platform it was hosted on. While I don’t necessarily believe that it would be relevant to all ALifers, it seems like the research methods for HRI research could be swinging back in the direction of simulations and virtual agents. If HRI offers a “fee waiver” option next year (and a virtual option, too), I would encourage ALifers to take a look at attending - and perhaps even to find ways in which their current work might fit into the world of human-robot interactions.

Results of the Fiction Science Contest

When I first thought of doing the Fiction Science Contest, I knew what the goal would be. You know when your code is not running and you find 10 old, unrelated, and so far undetected bugs before solving the real issue? The real bug was only a useful pretext: if you look for problems, you will find them. I wanted to create an occasion for science to find undetected bugs in its commonly accepted assumptions. I had no idea of what topic to choose, but thankfully my biologist colleague was inspired, and that is how we came up with the idea of fictional viruses hopping from the biological to the artificial world and vice versa. I recruited the famous-but-anonymous neuro-blogger and Twitter critic [Neuroskeptic](https://twitter.com/Neuro_Skeptic) as a 3rd judge and we launched the contest.

We received 11 entries, 1 of which was generated by an AI. A recurrent topic in many entries was mRNA sequencing. Our participants imagined ways to exploit vulnerabilities in the computers used to analyse data from viruses: as the most obvious entry point of outside information, sequencing was a prime candidate for malicious tinkering. But some entries also wondered if natural evolution could cause feedback loops between computers and biological organisms. And finally, some entries imagined less nefarious motives for cross-domain infection: research!

See the ranking and read the entries here.

And let us know what you think: far-fetched? Plausible? Threatening? Did any of them change the way you think about a given phenomenon? And of course, if you publish your impressions (blog / Twitter / paper...) or have your own story to submit, we'll be glad to add the link to this page. Contact fiction.for.science {at} gmail.com

Finally, congratulations to the winners:

- Romain from France for "Bio-infection through computer virus heavily suspected", winner for Prompt 1: First Case of Biological Organism Infected by Computer Virus.

- Claus ARANHA and Diego ARANHA (no relation) for "Nature finds a way", winner for Prompt 2: Computer Virus Nicknamed 'Akabake' Believed to Have Biological Origins. This submission got the highest score across all categories.

Upcoming Deadlines:

- ALIFE Workshops -- Check the deadline for each workshop

- Animals to Animats -- May 9th

- Open Ended Evolution Challenge at GECCO2022 -- June 15th

- Virtual Creatures Competition -- July 1st

ALife Community Podcast: Call for Questions

We are happy to announce that we will be recording the first episode of the ALife Community Podcast in May. Our first guest is due to be the current president of the ISAL board, Dr. Charles Ofria. We are looking for questions from the community to put to Dr. Ofria. If there is anything you would like to ask him, please email imytkhan {at} gmail.com with the subject "ALife Podcast Questions", or send a DM on Twitter to Imy Khan (@imy_tk).

Call for Volunteers

- The newsletter is looking for volunteers to curate content and come up with new ideas! Contact lana.sinapayen {at} gmail.com

- The Encyclopedia of Artificial Life is live and needs contributions!

- How cool would it be to have an ALife podcast? If you are interested in helping out, contact imytkhan {at} gmail.com